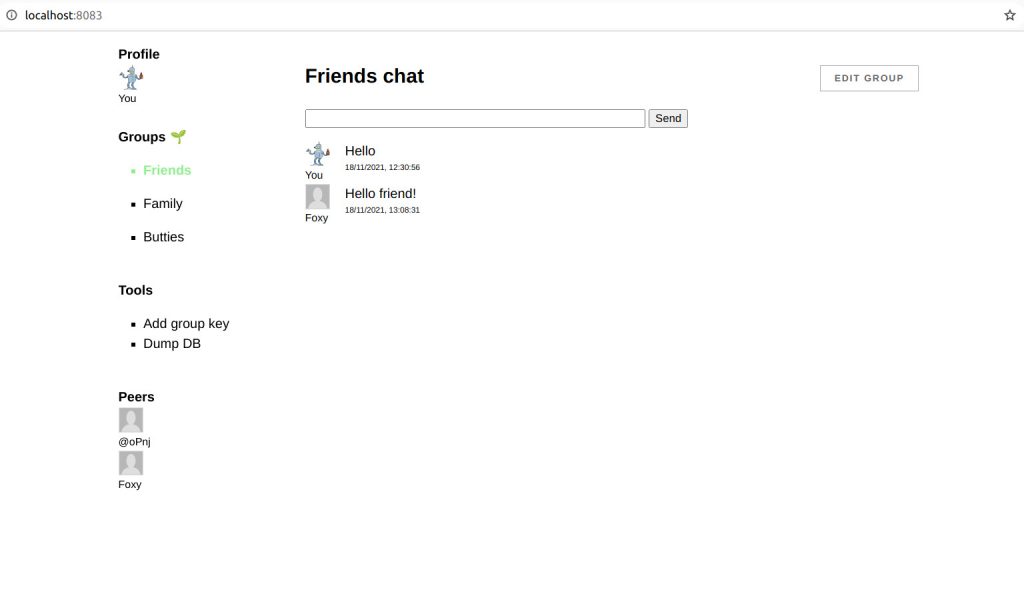

SSB-browser, as mentioned in my last blog post, runs an SSB client in the browser. In order to communicate with other peers it needs a pub to relay messages through. Furthermore, the pub acts as a super node, allowing the browser peer to do partial replication without having to store the full feeds of peers.

We don’t gain much over the classical client-server setup if we rely too heavily on pubs, especially on a single one. The browser client can of course run fully offline, but when it needs to exchange messages with other peers it still needs some intermediary.

In this post I will show how to set up a pub that can be used together with SSB-browser. I will also show how this can be used to build a network totally separate from the main scuttlebutt network, for local groups and communities.

First of all one needs to install the basic ssb-server. On my pub I use ssb-minimal-pub-server. The good thing is that this already comes with everything we need for peer invites, so we just need to install the aforementioned ssb-partial-replication module into ~/.ssb/node_modules/. The easiest way is to git clone it into that directory and run npm install there. It is also recommended to install ssb-tunnel, but this is not required.

Next we need to configure ssb-server to use the modules and to enable web sockets so the browser is able to connect.

```json
{
  "connections": {
    "incoming": {
      "net": [{ "port": 8008, "host": "::", "scope": "public", "transform": "shs", "external": ["between-two-worlds.dk"] }],
      "ws": [{ "port": 8989, "host": "::", "scope": "public", "transform": "shs", "external": ["between-two-worlds.dk"], "key": "/etc/letsencrypt/live/between-two-worlds.dk/privkey.pem", "cert": "/etc/letsencrypt/live/between-two-worlds.dk/cert.pem" }]
    },
    "outgoing": {
      "net": [{ "transform": "shs" }],
      "tunnel": [{ "transform": "shs" }]
    }
  },
  "logging": {
    "level": "info"
  },
  "plugins": {
    "ssb-device-address": true,
    "ssb-identities": true,
    "ssb-peer-invites": true,
    "ssb-tunnel": true,
    "ssb-partial-replication": true
  }
}
```

As one can see, this uses Let's Encrypt certificates for the web socket connection, but that is not strictly needed.
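Before starting the pub, it can help to sanity check the config. The following is a minimal sketch, not something ssb-server provides: `checkPubConfig` is a hypothetical helper that only looks at the pieces this post relies on (an incoming `ws` entry and the ssb-partial-replication plugin), and it assumes the config has already been parsed into an object.

```javascript
// Hypothetical helper (not part of ssb-server): lists what is missing
// from a pub config object shaped like the one above.
function checkPubConfig(config) {
  const problems = []
  const incoming = (config.connections && config.connections.incoming) || {}

  // The browser connects over web sockets, so a "ws" entry is required.
  const ws = incoming.ws || []
  if (ws.length === 0) problems.push('no incoming "ws" connection configured')

  // TLS is optional here, but "key" and "cert" only make sense as a pair.
  for (const entry of ws) {
    if (Boolean(entry.key) !== Boolean(entry.cert)) {
      problems.push('ws entry on port ' + entry.port + ' has only one of "key" and "cert"')
    }
  }

  // Partial replication is the plugin the browser client depends on.
  const plugins = config.plugins || {}
  if (plugins['ssb-partial-replication'] !== true) {
    problems.push('plugin "ssb-partial-replication" is not enabled')
  }
  return problems
}

// A trimmed-down config without TLS, for illustration only.
const example = {
  connections: {
    incoming: { ws: [{ port: 8989, host: '::', scope: 'public', transform: 'shs' }] }
  },
  plugins: { 'ssb-partial-replication': true }
}
console.log(checkPubConfig(example))
```

An empty list means the config passes these checks. It says nothing about whether the ports are reachable from the outside.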

Lastly we need to start up the server. I run it using the following bash script, which restarts the pub if it should crash.

```bash
#!/bin/bash

# Restart the pub 30 seconds after it exits, appending all output to log.txt
while true; do
  sbot server --port 8008 >> log.txt 2>&1
  sleep 30
done
```

This is everything you need to run your own scuttlebutt pub that supports browser clients.

Separate network

If one wants to run a network totally separate from the main scuttlebutt network, one needs to use a different caps key. This means that messages from this network will never appear on the main network. The key can be generated using node:

```javascript
crypto.randomBytes(32).toString('base64')
```
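The one-liner above works in the node REPL. As a standalone script it could look something like the sketch below, which also prints the key in the shape the config expects. `generateCapsKey` and `isValidCapsKey` are names made up for this example.

```javascript
const crypto = require('crypto')

// Generate a fresh network key: 32 random bytes, base64 encoded.
function generateCapsKey() {
  return crypto.randomBytes(32).toString('base64')
}

// Hypothetical check: the string must be canonical base64 for exactly 32 bytes.
function isValidCapsKey(key) {
  if (typeof key !== 'string') return false
  const bytes = Buffer.from(key, 'base64')
  return bytes.length === 32 && bytes.toString('base64') === key
}

const shs = generateCapsKey()
if (!isValidCapsKey(shs)) throw new Error('generated key is malformed')

// Print a fragment that can be pasted into the pub config.
console.log(JSON.stringify({ caps: { shs } }, null, 2))
```

Every peer that should be part of the network, pub and browser clients alike, needs this exact same value.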

The key can then be added to the config file on the pub:

```json
"caps": {
  "shs": "72I4EC/gZUuNffHEwooHob4hhtXHAj0HE5xKM3njRSg="
}
```

SSB-browser-core needs a little tweak to use the same caps. Change browser.js to:

```javascript
require('./core').init(dir, {
  caps: {
    shs: '72I4EC/gZUuNffHEwooHob4hhtXHAj0HE5xKM3njRSg='
  }
})
```

It might also be a good idea to change hops to 2 in the client. To bootstrap the network, generate an invite or follow the first user from the command line of the pub, so that messages start propagating.

```bash
sbot publish --type contact --contact '@feedId=.ed25519' --following
```
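For reference, the message content that this command publishes should look roughly like the object built below. This is only a sketch: `followContent` is a hypothetical helper, and the feed ID is a random placeholder rather than a real user.

```javascript
const crypto = require('crypto')

// Hypothetical helper: builds the content of a follow ("contact") message.
function followContent(feedId) {
  // A feed ID is "@" + base64 of a 32-byte ed25519 public key + ".ed25519".
  if (!/^@[A-Za-z0-9+/]{43}=\.ed25519$/.test(feedId)) {
    throw new Error('not a valid feed ID: ' + feedId)
  }
  return { type: 'contact', contact: feedId, following: true }
}

// Placeholder ID made from random bytes, for illustration only.
const exampleId = '@' + crypto.randomBytes(32).toString('base64') + '.ed25519'
console.log(followContent(exampleId))
```

The format check is a cheap way to catch a mistyped ID before anything is published, since messages on a feed cannot be removed afterwards.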

That is all you need to run your separate network 🙂